Big problems are best solved in small pieces

Frank Sonnenberg

We are working with a finance sector client that is looking to offload some of its production MIPS to the more cost-effective Software Defined Mainframe (LzSDM). Like most finance sector clients, the organization is extremely risk-averse and very comfortable with the long history of reliability and performance provided by the legacy mainframe environment. My job as Senior Client Engineer is to convince the client that LzSDM can help reduce their dependency on the mainframe through a low-risk, incremental migration process. How do I do this? By demonstrating LzSDM running the client's own production workload.

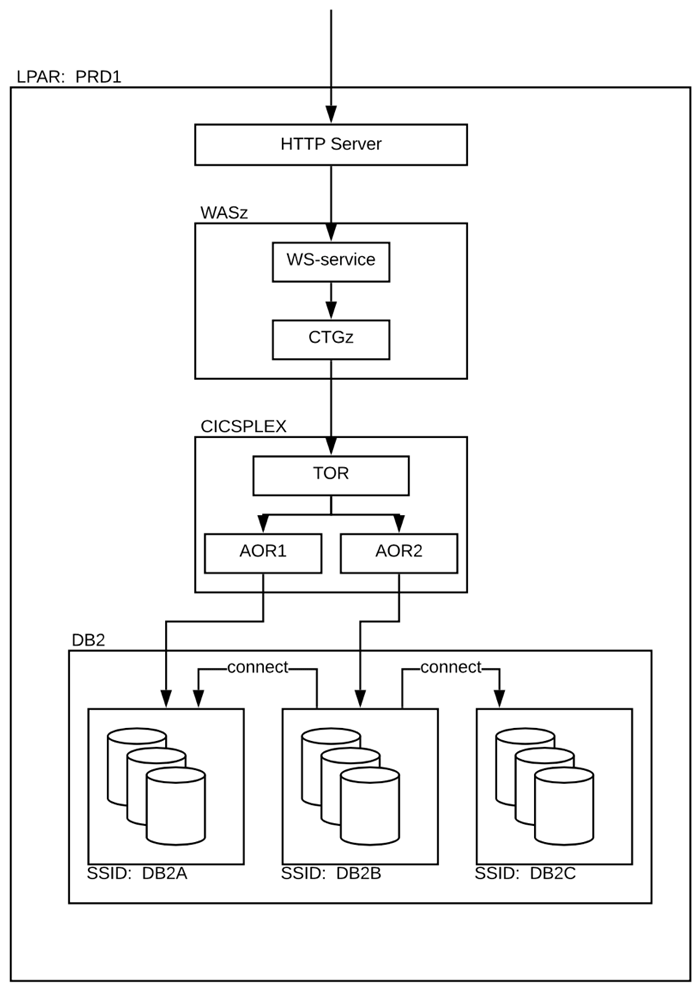

The shop runs a decent-sized set of CICS, COBOL and Db2-based applications, a ‘sweet spot’ for LzSDM given our coverage through the LzOnline, LzRelational and LzBatch product sets. However, like many organizations carrying a legacy environment built up during the 1970s and 1980s, the company went through the ‘first wave’ of IT modernization over the past 20 years by adding a web-facing layer on top of the legacy systems. So we deal with WebSphere Application Server (WAS), CICS Transaction Gateway (CTG), an Enterprise Service Bus (ESB) and Distributed Relational Database Architecture (DRDA), each providing access points for a host of other systems that want to interact with the underlying CICS, COBOL and Db2 applications. After analysis, an environment similar to the one in the accompanying picture emerges:

As LzSDM is a binary-compatible replacement for the legacy mainframe services provided by CICS and Db2, slotting into this environment, while complex, is doable. In fact, the multi-layered architecture makes LzSDM an ideal candidate for offloading the work, and (as far as we know) the only way to do so in piecemeal fashion, which helps create trust in the solution. Like all trust, it is earned slowly, and at first in bite-sized chunks.

In this environment the legacy CICS has effectively become a provider of web-services, consumed by the user-facing and client-facing systems. Some of these web-services are more critical than others. This lets the client match the migration to their appetite for risk: only selected web-services are offloaded into the LzSDM domain, piece by piece, in a controlled and testable manner.
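The idea of offloading only selected web-services can be sketched as a simple routing decision made in front of the two platforms. This is a minimal illustration, not the client's actual gateway configuration: the service names, the target labels and the `offload`/`rollback`/`route` helpers are all hypothetical.

```python
# Hypothetical target labels; a real setup would route at the ESB, CTG or WAS layer.
MAINFRAME = "legacy-cics"
SDM = "lzsdm"

# Web-services approved for offload so far (hypothetical names).
offloaded = set()


def offload(service: str) -> None:
    """Mark a web-service as handled by the SDM from now on."""
    offloaded.add(service)


def rollback(service: str) -> None:
    """Send a web-service back to the mainframe, e.g. after a failed comparison."""
    offloaded.discard(service)


def route(service: str) -> str:
    """Return the platform that should handle a call to this web-service."""
    return SDM if service in offloaded else MAINFRAME


offload("getBranchDetails")
print(route("getBranchDetails"))  # lzsdm
print(route("postPayment"))       # legacy-cics
```

Because the decision is made per service, one service can be moved, tested and, if necessary, moved back without touching the others.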

Your data remains the same

Testing is obviously critical to a successful mainframe migration project. The LzLabs promise is that your data remains the same as it was on the legacy environment: your VSAM is still VSAM, your GDGs are still GDGs, and everything is still in EBCDIC encoding and big-endian byte order, with the same internal representations of zoned, packed and floating-point numeric data. All of these unique aspects of the legacy mainframe environment have a significant impact on the way your applications process their data.
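To make these representation differences concrete, the following Python sketch shows the same values as bytes under mainframe and x86-style conventions. It is an illustration only: `cp037` is just one of several EBCDIC code pages (which one a given shop uses is an assumption here), and `pack_decimal` is a hypothetical helper written for this example.

```python
def pack_decimal(value: int, digits: int) -> bytes:
    """Encode an integer as packed decimal: one digit per nibble, sign in the last nibble."""
    sign = 0xC if value >= 0 else 0xD          # 0xC = positive, 0xD = negative
    text = str(abs(value)).zfill(digits)
    if len(text) % 2 == 0:                     # digits plus sign nibble must fill whole bytes
        text = "0" + text
    nibbles = [int(ch) for ch in text] + [sign]
    return bytes((nibbles[i] << 4) | nibbles[i + 1] for i in range(0, len(nibbles), 2))


# Character encoding: the same text has different bytes in EBCDIC and ASCII.
print("HELLO".encode("cp037").hex())   # c8c5d3d3d6
print("HELLO".encode("ascii").hex())   # 48454c4c4f

# Byte order: the same 32-bit integer is laid out differently.
print((258).to_bytes(4, "big").hex())     # 00000102
print((258).to_bytes(4, "little").hex())  # 02010000

# Packed decimal has no native x86 equivalent.
print(pack_decimal(12345, 5).hex())    # 12345c
print(pack_decimal(-123, 3).hex())     # 123d

# Collating sequence: sorting by raw bytes gives a different order per encoding.
keys = ["a", "A", "1"]
print(sorted(keys, key=lambda k: k.encode("ascii")))  # ['1', 'A', 'a']
print(sorted(keys, key=lambda k: k.encode("cp037")))  # ['a', 'A', '1']
```

The last two lines show why this matters beyond storage: a program that sorts or compares keys can produce differently ordered output once the encoding changes, even though the logic is untouched.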

When migrating to non-mainframe environments that change these qualities (e.g. x86 is little-endian and typically uses ASCII encoding), comparing execution results before and after the migration becomes problematic. The LzLabs promise completely sidesteps this massive challenge by allowing a direct comparison between the legacy execution results and those achieved within the SDM: they will be the same. We leverage this capability to smooth and de-risk the process of mainframe migration by capturing baseline database snapshots and transaction execution results, first from the legacy machine and then from LzSDM, for automated comparison.

An army of testers is no longer needed to validate that your critical business logic has been carried over correctly to the new environment. Instead, these comparisons simply become part of the migration, run repeatedly as business as usual (BAU) throughout the project.
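Because the data formats are unchanged, the automated comparison can be a plain byte-for-byte check with no translation step. The sketch below assumes, purely for illustration, that each run has been captured as a mapping from a transaction ID to its raw result bytes; the capture format and the `compare_runs` helper are hypothetical and not part of any LzLabs tooling.

```python
def compare_runs(baseline: dict[str, bytes], candidate: dict[str, bytes]) -> list[str]:
    """Return the sorted IDs of transactions whose results differ or exist on one side only."""
    differences = []
    for txn_id in sorted(baseline.keys() | candidate.keys()):
        # .get() returns None for a missing ID, which never equals captured bytes.
        if baseline.get(txn_id) != candidate.get(txn_id):
            differences.append(txn_id)
    return differences


# Hypothetical captured results: EBCDIC text and a packed-decimal amount.
legacy = {"TXN001": bytes.fromhex("c8c5d3d3d6"), "TXN002": bytes.fromhex("12345c")}
sdm = {"TXN001": bytes.fromhex("c8c5d3d3d6"), "TXN002": bytes.fromhex("12345c")}

print(compare_runs(legacy, sdm))  # []
```

An empty list means the two runs are identical; any ID that appears is a concrete transaction to investigate before the next service is moved.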

Simplest web-services first

Back to web-services: a partial migration begins with selecting the candidate services. We learned that some of these are ‘read-only’, in that they access data within Db2 but do not change it. Others do update data within the legacy database, and some web-services invoke complex table joins and other potentially long-running queries. We chose to work with the simplest, read-only web-services first, offloading this processing to an SDM configured with the executable load libraries, and with the configuration of the relevant CICS regions transferred to an LzOnline instance.
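The selection rule described above (read-only before updating, simple before complex) can be expressed as a sort order. This is only a sketch of the reasoning: the `Service` fields, the use of a join count as a stand-in for query complexity, and the service names are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Service:
    name: str
    updates_data: bool   # True if the service changes data in Db2
    join_count: int      # rough, hypothetical proxy for query complexity


def migration_order(services: list[Service]) -> list[Service]:
    """Order candidates: read-only first, then by increasing complexity, then by name."""
    return sorted(services, key=lambda s: (s.updates_data, s.join_count, s.name))


candidates = [
    Service("postPayment", updates_data=True, join_count=1),
    Service("getStatement", updates_data=False, join_count=4),
    Service("getBranchDetails", updates_data=False, join_count=0),
]

for service in migration_order(candidates):
    print(service.name)
# getBranchDetails
# getStatement
# postPayment
```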

As these are read-only services one approach we could have taken is to mirror the required data between the legacy Db2 and the LzRelational database instance, likely employing a data propagation or replication tool.

Each environment would contain its own local copy of this reference data, and the copy within the legacy environment would be considered the single point of truth, replicated frequently to the other copies. However, as the client intends to go on to offload more complex web-services and transactions, we chose instead to keep the data on the legacy machine and access it remotely from the LzSDM instance(s) during the initial phase of migration.

Foreign Data Wrapper

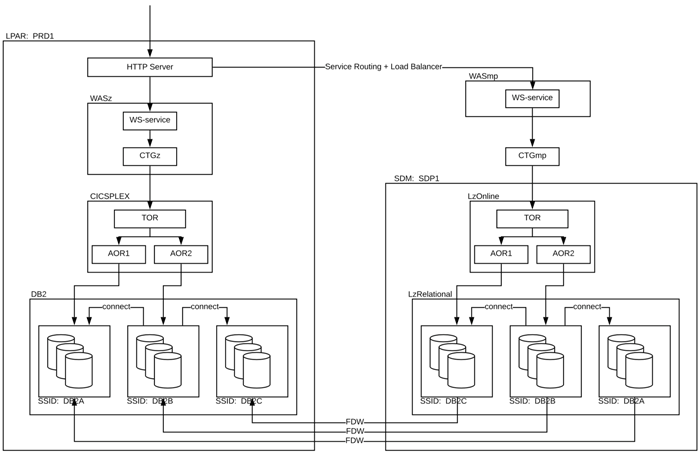

We require no change to the client’s application code and execute the same binary load modules that are concurrently in use on the legacy mainframe. Combining this with the LzLabs-developed implementation of SQL Management of External Data (MED) between Postgres and Db2, also known as the ‘Foreign Data Wrapper’ or FDW, gives the architecture shown in the accompanying diagram.

The FDW implementation lets Postgres reach into the legacy Db2 for a table that is catalogued locally. We thus maintain data integrity, because the web-services remaining on the mainframe continue to access the same data. Leaving the data on Db2 for the moment, while transferring the workload from CICS to LzOnline, helps us gradually establish the trust such a cautious client needs: that LzSDM really can migrate complex applications incrementally to a modern platform while sustaining seamless interoperation with the mainframe.
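For readers unfamiliar with SQL/MED, a table is "catalogued locally" in Postgres through the standard `CREATE SERVER` and `CREATE FOREIGN TABLE` statements. The Python sketch below only assembles those statements as text. The wrapper name `example_db2_fdw`, the server, table and column names, and the `schema_name`/`table_name` options are assumptions for illustration; the options accepted by the LzLabs FDW are not described in this article and may differ.

```python
def foreign_table_ddl(server: str, wrapper: str, table: str,
                      columns: list[tuple[str, str]], remote_schema: str) -> list[str]:
    """Build generic PostgreSQL SQL/MED statements that catalogue a remote table locally."""
    column_list = ",\n    ".join(f"{name} {sql_type}" for name, sql_type in columns)
    return [
        # Registers the remote database under a local server name.
        f"CREATE SERVER {server} FOREIGN DATA WRAPPER {wrapper};",
        # Declares the local definition; rows stay in the remote database.
        # The OPTIONS keys are wrapper-specific and hypothetical here.
        f"CREATE FOREIGN TABLE {table} (\n    {column_list}\n) SERVER {server} "
        f"OPTIONS (schema_name '{remote_schema}', table_name '{table}');",
    ]


statements = foreign_table_ddl(
    server="legacy_db2",              # hypothetical server name
    wrapper="example_db2_fdw",        # hypothetical wrapper name
    table="branch",                   # hypothetical table
    columns=[("branch_id", "integer"), ("branch_name", "varchar(40)")],
    remote_schema="PROD",             # hypothetical Db2 schema
)
print("\n".join(statements))
```

Once such a definition exists, an ordinary `SELECT` against the local table name is forwarded by the wrapper to the remote database, which is why the application code does not need to know where the rows physically live.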

Slowly we build up a suite of web-services that scale out to a load-balanced set of LzSDM instances, each processing part of the client’s total workload. Next, we move to the transactions and services that update data. It’s a work in progress, but it should eventually be possible to migrate the entire estate from mainframe to Software Defined Mainframe, one step at a time.