The most technologically efficient machine that man has ever invented is the book.

Northrop Frye

If more people still owned the book that documents the configuration of their mainframe applications, it would make the life of a mainframe rehoster much easier.

Indeed, some of the mainframe technologies we see every day may have last been documented using pen and ink. Many in our industry would argue that source code repositories and application dependency maps would serve a better purpose. It would be nice to have those as well…

For many of our customers, the mainframe is a mystery. Application owners are retiring, leaving behind a matrix of confusing and only partly documented systems, accompanied by a myriad of third-party software products, each of which does… something. Maintaining these applications and licensing these products costs a fortune, yet they usually provide functionality so critical to business processes that enterprise architects daren’t touch them for fear of interrupting the church of the mainframe forever.

In this new blog series, we will give you an insight into the technologies and processes we find as we help these companies move from Business as Usual, ‘BAU’ (what am I running, what does it do and who should manage it?), to Application Modernization, ‘AM’ (here’s what I should and shouldn’t keep, here’s what should run where and here’s how we might modernize it).

Locked-in to legacy services

Of course, you might argue that, as a provider of mainframe rehosting technology, we’re biased in our perception of AM. We see a landscape where legacy mainframe infrastructure consumes a disproportionately large share of the IT budget while providing fewer and fewer modern services. Yet because these legacy services are so critical, the customer is effectively locked in, with little hope of escaping this environment (and its associated costs!).

This situation has created strong resistance to moving entire workloads en masse to modernized platforms such as the cloud. As legions of past projects will attest, mainframe replacement initiatives are typically high-risk, have high failure rates and inevitably cost more than estimated. This leaves only one practical approach for a mainframe rehoster such as LzLabs: partial, iterative migration with interoperability along the entire path. We typically start by moving individual applications, one piece at a time, to modernized platforms that are much more readily understood and easier to manage, while being significantly less expensive to maintain in terms of hardware, software and people-ware.

Logical intersection points

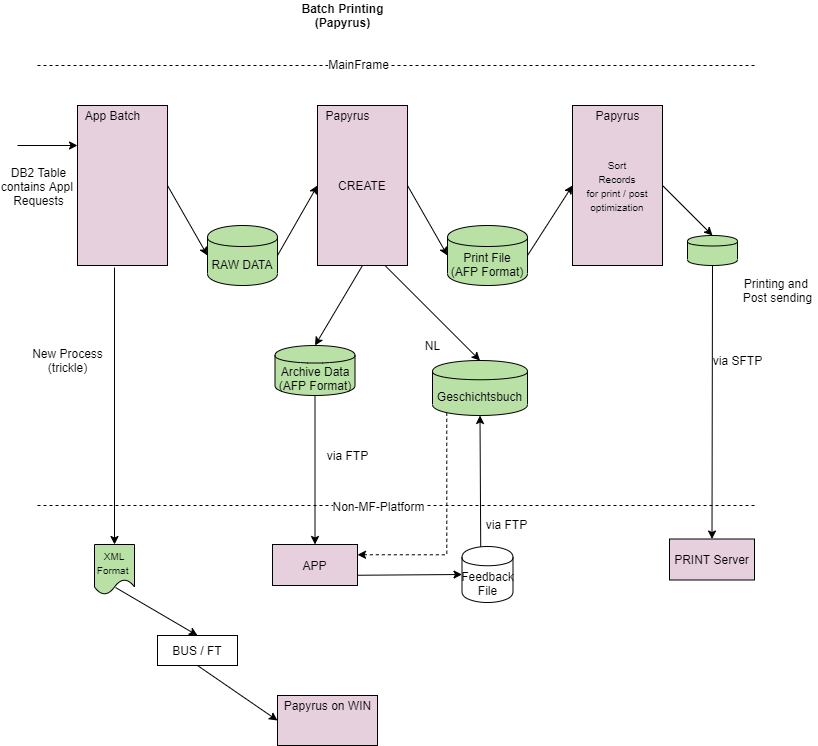

When rehosting mainframe apps in distributed environments, we don’t try to replicate mainframe processes, because distributed processes and solutions work differently. Rather, we look for logical intersection points where the output from the mainframe systems and applications can be fed into an entirely modern way of solving the same business problem. As an example that we often encounter in client systems, let’s look at printing applications: in this case, a printing architecture we recently discovered at a large European insurance firm.

Printing might seem a straightforward place to start any digitization and modernization effort. However, the insurance industry has been something of a laggard in this respect, with many firms still preferring to print documents such as policies and contracts. Although these organizations may be among the last to go “paperless”, there are still plenty of stages in the document production process where policy documentation is available in digital formats.

In reality, the typical document generation process is complicated and multifaceted. A multitude of third-party products are woven into mainframe architectures to control aspects of the legacy environment’s Advanced Function Presentation (AFP) suite. In our example, the customer’s printing application depended on third-party products for document composition, together with Papyrus Server for final print and output management. Very little was known about how this architecture worked: How does it print? What does it print? Where are the commands given and received, when does a document go onto paper (or not), and how is this decided?

Architecture map of printing application

As a preliminary step, working with the client’s Enterprise Architecture and Mainframe Services teams, LzLabs Discovery specialists developed an architecture map of the company’s printing application and of the roles played by individual applications in enriching documents within the legacy environment (see figure 1).

Once the application’s functions and dependencies were understood, the client wanted to explore whether distributed solutions could achieve the same (or better) results. The analysis established the baseline for future modernization, in which new technologies could make “paperless” processing a more realistic and achievable goal.

Non-mainframe printing solutions

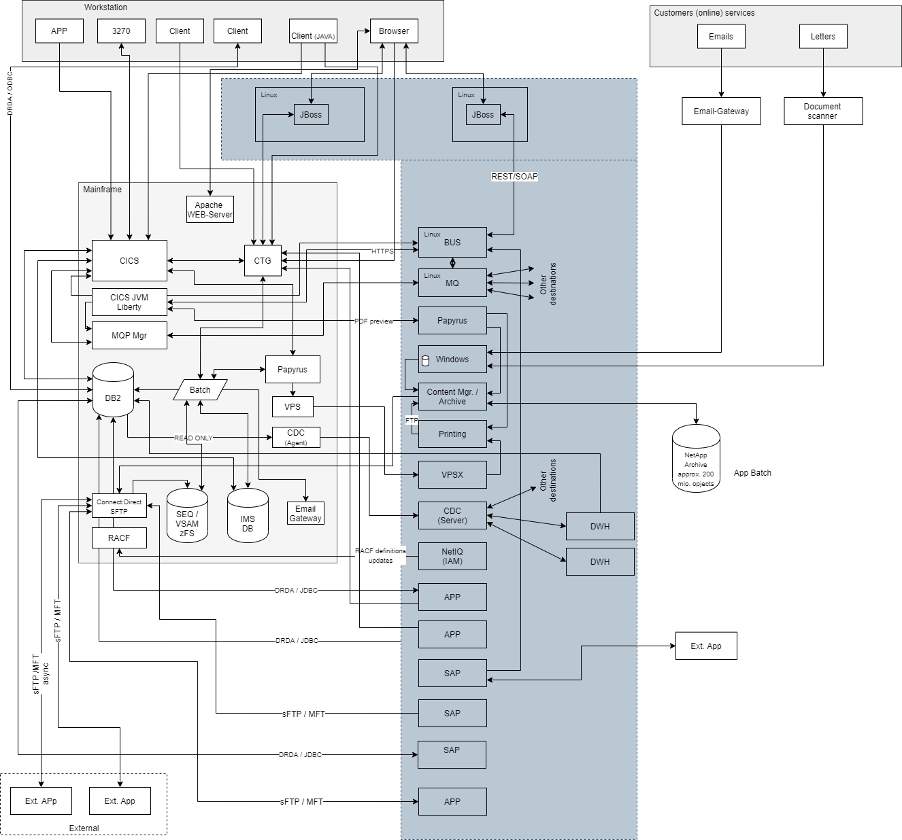

As with many mainframe utilities, comparable non-mainframe printing solutions were available and could be integrated with the client applications that generate material for printing, once those applications had been migrated to the LzLabs Software Defined Mainframe® (SDM). As shown in the architecture diagram, by using our Linux implementations of the LzOnline™ and LzBatch™ subsystems, and implementing a PostgreSQL database in place of the DB2® and IMS™ DB database management systems, we were able to deploy the customer’s printing application, together with its existing architecture components, in a distributed environment. From here, the client can take advantage of modern technologies to adapt printing incrementally: for example, integrating alternative Content Management Systems, enabling end users to manage documents themselves, adding interactive elements, and so on.

This example offers a first insight into how rehosting mainframe applications on open systems helps drive “straightforward” innovation that would be a real challenge in the legacy environment. As this series continues, you’ll learn more about the environments and technologies we encounter, and how we help our customers overcome the complexities of modernizing them.