Proving the technical feasibility of a mainframe migration solution is no mean feat. In this blog we will analyse how we work hand in hand with our customers to assure them that decades-old, highly reliable systems can run in an open systems environment. We will also explore why the term “technical feasibility” can have a very different meaning from one project to the next.

Defining technical feasibility – understanding your environment

When rehosting application binaries on x86 or the cloud, feasibility is defined as achieving “equivalent behaviour” in the target environment. A given input must return the same result on our LzLabs Software Defined Mainframe® (LzSDM®) as it does on the legacy mainframe.

To achieve this, we follow a four-step process as part of our discovery approach – LzDiscover (read more about LzDiscover here):

- Binary analysis: this step ensures that all programming languages and library functions within the mainframe binaries to be rehosted are known and can be supported on LzSDM.

- Source code analysis: program sources, database definitions, job control scripts, and various other configuration settings are checked for support on LzSDM.

- Workload analysis: how is workload driven into the online systems? Is it via transaction monitors such as CICS® or IMS™ or other started tasks? Which communication service is used to trigger transactional processing – SOAP/XML, Transaction Gateway, MQ or proprietary processes? Batch processing is analysed for usage of special tools and to see how it is scheduled or triggered.

- Mainframe tools: there is a range of ways to replace mainframe tools with open-source or Linux versions. To do this we also need an understanding of the client’s future platform strategy.

Full vs. partial migration

Our next step, in conjunction with the customer, is to decide on a migration approach. The options are either a full migration (otherwise known as “big bang”) or a partial migration, meaning the dissection of application groups or even parts of applications to migrate piece by piece. This decision is determined by the risk appetite of the customer, which we generally assume to be low given the critical nature of mainframe systems.

On the surface, partial migration reduces project risk; however, it can increase overall complexity.

When mainframe applications are dissected and migrated to different environments, interfaces must be created that didn’t exist before (or they existed but had no network in between). For one of our customers – BPER Banca – this meant that several online transaction regions were migrated to LzSDM and the database tables remained in Db2® format. This approach was the most palatable for the company, as critical services could be switched between LzSDM and mainframe during migration, with minimal service disruption.

Interfaces between such components require connections, impacted by latency and bandwidth. In a typical customer engagement, we must ask: is it technically feasible to divide these applications? Might “big bang” present a more compelling business case? These questions feed into the notion of technical feasibility and are determined as part of our discovery.
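To make the latency and bandwidth question concrete, here is a minimal back-of-the-envelope sketch. The function names and all figures are hypothetical and chosen only to illustrate the arithmetic; real values would come from discovery and measurement.

```python
def added_latency_ms(calls_per_transaction: int, round_trip_ms: float) -> float:
    """Extra elapsed time per transaction when every call crosses the network."""
    return calls_per_transaction * round_trip_ms


def payload_transfer_ms(payload_bytes: int, bandwidth_mbit_s: float) -> float:
    """Time to move a payload over the link, ignoring protocol overhead."""
    bits = payload_bytes * 8
    return bits * 1000 / (bandwidth_mbit_s * 1_000_000)


# Hypothetical figures: 40 database calls per transaction, 0.5 ms round trip,
# and a 1 MB result set over a 1 Gbit/s link.
extra = added_latency_ms(calls_per_transaction=40, round_trip_ms=0.5)
transfer = payload_transfer_ms(payload_bytes=1_000_000, bandwidth_mbit_s=1000)
print(f"added latency per transaction: {extra} ms")
print(f"transfer time for the payload: {transfer} ms")
```

A call that was effectively free when application and database shared a machine now carries a round trip each time, so the number of calls per transaction matters as much as the link speed.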

Measurement during migration

Once discovery is complete, and a migration approach agreed, the project can begin. Proving technical feasibility does not stop here though. “Equivalent behaviour” as defined earlier must be demonstrated consistently to ensure LzSDM and its surrounding architecture deliver the business results our customer expects.

Sustaining performance is important: for online transactions, end user response times and overall transaction throughput must be sustained. For batch workload, it is important that a given batch window is not exceeded.
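The two performance criteria above can be expressed as simple checks. This is an illustrative sketch only: the 95th percentile, the 10% tolerance and the sample figures are assumptions, not thresholds taken from any LzLabs project.

```python
import math
from datetime import datetime, timedelta


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def response_time_sustained(baseline_ms, target_ms, pct=95, tolerance=0.10):
    """True if the target percentile is no more than `tolerance` above the baseline."""
    return percentile(target_ms, pct) <= percentile(baseline_ms, pct) * (1 + tolerance)


def batch_window_met(started, finished, window):
    """True if the batch run finished within the allowed window."""
    return finished - started <= window


# Hypothetical response times in milliseconds.
mainframe = [100, 110, 120, 130]
target = [105, 115, 125, 140]
print(response_time_sustained(mainframe, target))

# Hypothetical overnight batch run with a six-hour window.
print(batch_window_met(
    datetime(2024, 1, 1, 22, 0),
    datetime(2024, 1, 2, 3, 30),
    timedelta(hours=6),
))
```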

We measure these elements through testing. Test tooling allows automation of unit tests, integration tests and complete system tests. Comparisons can be carried out on datasets, databases, transaction logs and on end points. For BPER Banca, we performed automated testing with scripts of over 6,000 individual test cases, comparing business performance on mainframe vs. LzSDM to ensure sustained service levels.
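As a rough illustration of what such a comparison involves, the sketch below checks recorded outputs from both environments byte for byte and reports where each failing case first diverges. `RecordedCase`, `first_difference` and `find_mismatches` are hypothetical names for this example and do not represent the actual test tooling.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RecordedCase:
    name: str
    mainframe_output: bytes
    target_output: bytes


def first_difference(a: bytes, b: bytes) -> Optional[int]:
    """Offset of the first differing byte, or None if the outputs are identical."""
    for offset, (x, y) in enumerate(zip(a, b)):
        if x != y:
            return offset
    if len(a) != len(b):
        return min(len(a), len(b))  # one output is a prefix of the other
    return None


def find_mismatches(cases):
    """Map each failing case name to the offset of its first differing byte."""
    report = {}
    for case in cases:
        offset = first_difference(case.mainframe_output, case.target_output)
        if offset is not None:
            report[case.name] = offset
    return report


# Hypothetical recorded outputs for two test cases.
cases = [
    RecordedCase("balance-enquiry", b"\xc1\xc2\xc3", b"\xc1\xc2\xc3"),
    RecordedCase("payment-post", b"\xf0\xf1\xf2", b"\xf0\xf1\xf3"),
]
print(find_mismatches(cases))
```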

Our unique no-recompilation approach makes automated testing straightforward and speeds up the project significantly. Any approach based on recompilation of source code would require comparison of EBCDIC-encoded results on the mainframe with ASCII-encoded results in the new environment. For lower-level comparisons, automated testing would then be practically impossible.
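A short sketch shows why byte-level comparison breaks down once the encoding changes. It uses Python's built-in `cp037` codec as one example of an EBCDIC code page; which code page a given system actually uses is an assumption here, and the sample record and keys are invented.

```python
record = "ACCT-01 Smith"

ebcdic_bytes = record.encode("cp037")  # one EBCDIC code page, as an example
ascii_bytes = record.encode("ascii")

# The same text yields different bytes, so a raw byte comparison reports a mismatch.
print(ebcdic_bytes == ascii_bytes)

# Decoding both sides recovers identical text, but this only works for character data.
print(ebcdic_bytes.decode("cp037") == ascii_bytes.decode("ascii"))

# Collating order differs as well: EBCDIC places lowercase before uppercase
# before digits, while ASCII places digits before uppercase before lowercase.
keys = ["a1", "A1", "11"]
ascii_order = sorted(keys, key=lambda k: k.encode("ascii"))
ebcdic_order = sorted(keys, key=lambda k: k.encode("cp037"))
print(ascii_order)
print(ebcdic_order)
```

Sorted files and keyed reads can therefore come back in a different order after re-encoding, even when every individual record is correct.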

Service Offloading as a first migration step for MIPS saving

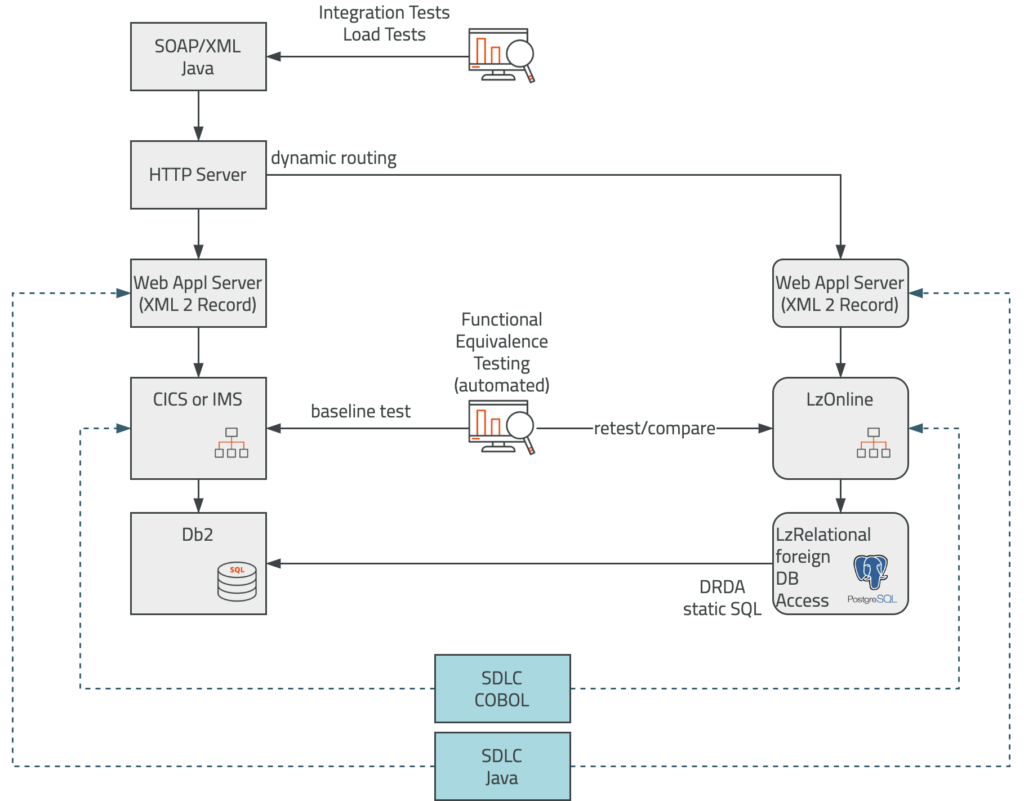

In a partial migration, the SDLC (Software Development Lifecycle) on the mainframe is extended to LzSDM to promote the load modules and bind packages at the same time. As soon as functional equivalence tests are completed, the integration and load tests can start, to ensure that the external components work well after service rerouting to LzSDM. The go-live cutover can be organised service by service.

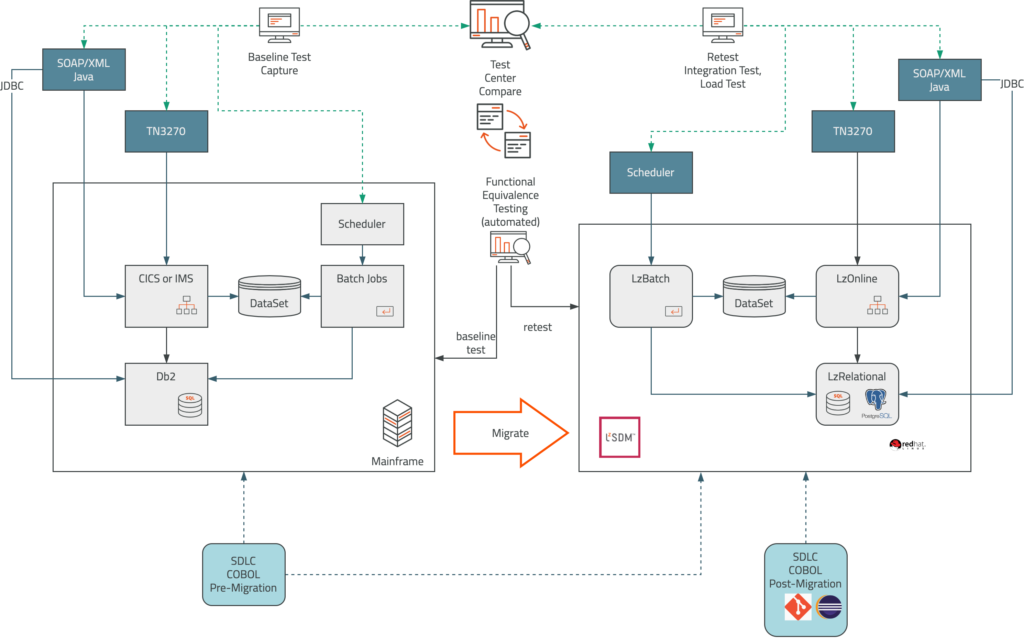

Full Migration with Automated Testing

Again, testing is the main focus for a migration to LzSDM. To reduce the freeze window for applications as much as possible, the SDLC remains on the mainframe during migration and extends the promotion of load modules and other artefacts to LzSDM. After migration, the application source code and other artefacts (JCL, DDL, configuration files) will be migrated to LzWorkbench, an Eclipse plug-in for COBOL or PL/I code development on LzSDM. Sources may be maintained with Git, Subversion or similar tooling.

More than feasible

With no recompilation or data changes required, the technical feasibility of a mainframe migration project increases significantly. With a strong understanding of your environment, a suitable migration approach and consistent testing, you’re set for success.

Our role is to work closely with you to demonstrate equivalent behaviour in the target environment, whilst building trust that our knowledge of mainframe and open systems environments will deliver an architecture that will serve your business for years to come.