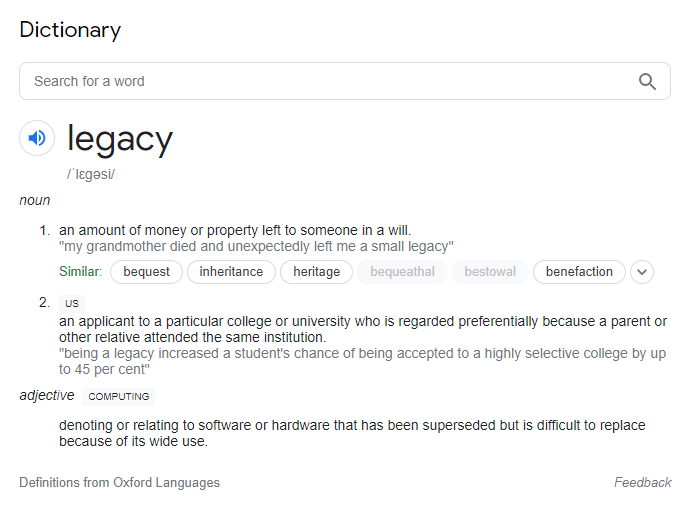

In areas other than computing, “legacy” carries some positive connotation. Generally, it speaks of benefits realized today as a result of things that have happened in the past.

Negative perception of legacy

In computing, legacy is perceived negatively. Many sources define legacy systems as hardware or software that is superseded, outmoded or obsolete. This article discusses why this has become the case, and asks whether an alternative view might lead to a better outcome for critical, system-of-record applications, which sometimes fall foul of the term legacy.

There is an important distinction between legacy systems and legacy applications where this subject is concerned. While the two terms are often used interchangeably, they have a very different bearing on the question of legacy. So much so that continuing to conflate them is likely to result in the baby being thrown out with the bathwater.

Legacy applications are carefully crafted, business-oriented programs that underpin many important commercial systems in use globally. These applications have become brittle as institutional knowledge of the programs has left the workforce. Furthermore, a patchwork quilt of enhancements, built over time without the benefits of a modern development pipeline, has impaired their maintainability.

Hardware, software and human infrastructure

Legacy systems are the hardware, software and human infrastructure upon which those applications depend in order to evolve and execute.

The colloquial use of the term legacy is most commonly found in the context of applications that pre-date the open-source era (more on this in a moment), specifically those applications that were originally written in the 70s, 80s and 90s. These legacy application assets, the creation of which was funded many years ago, have been sweated effectively over decades of use, and have provided a return on their initial investment many times over.

Have these applications, which continue to provide significant value to organizations, been superseded by something better? Are improved and cost-effective alternatives realistically within reach? That may be the case at the margins, but for some of the core, systemic applications that power world commerce, “legacy”, as applied to computing, would be more accurately defined in the mainstream, positive sense of the word. Yet the notion that legacy is a problem has become conventional wisdom.

Vendor lock-in

So, what is it about applications originally developed in the 70s, 80s and 90s that seems to define the “negative legacy” sentiment so acutely? My view is that it’s far less about the applications being superseded than it is about the constraints under which they can evolve. When legacy applications were originally coded, there were few language and deployment choices available. Little consideration was given to the long-term impact of locking an application into a particular vendor’s proprietary hardware and software infrastructure. The consequence today is that further development of these applications is largely dependent upon enhancements to the legacy system infrastructure, and those enhancements are determined by the infrastructure vendors’ own commercial interests. The notion of vendor lock-in, both technical and commercial, is well-studied in the broader economic sense[1], and it certainly applies in the case of legacy applications.

The Cambrian explosion in open-source activity, which started around the turn of the millennium, was partly a response to this lock-in. The resulting increase in choice for development and deployment of commercial applications has been extraordinary. Open-source infrastructure is built collaboratively, which leads to greater standardization and interoperability. Developments such as virtualization, containerization and software-defined technology have broken the link between an application and the infrastructure upon which it would otherwise depend. Consequently, infrastructure improvements focus on benefits to the overall development ecosystem, rather than locking applications further into a particular vendor’s proprietary toolset. Applications that have this choice available for their future development do not attract the term “legacy”, regardless of their age.

Finally, to illustrate the point, try this thought experiment. Imagine if your legacy applications could be:

- Maintained and enhanced by programmers of any generation

- Improved using your choice of programming language or open-source project

- Enhanced as quickly as any other application in your environment

- Run in any cloud environment and benefit from cloud native infrastructure

Would the computing definition of legacy still seem appropriate? This line of thinking suggests that solving the legacy application problem is more about restoring infrastructure choice than simply assuming the application has been superseded.